The OpenAPI and JSON Schema Divergence Problem

Update 2020-02-03: OpenAPI v3.1 will be using JSON Schema Draft 2019-09, so tooling vendors should get to work on upgrading support for JSON Schema 2019-09 and the other OpenAPI v3.1 changes.

This article is going to explain OpenAPI and JSON Schema divergence, which I’ve been calling the subset/superset/sideset problem. It’ll finish up explaining how we’re going to solve it.

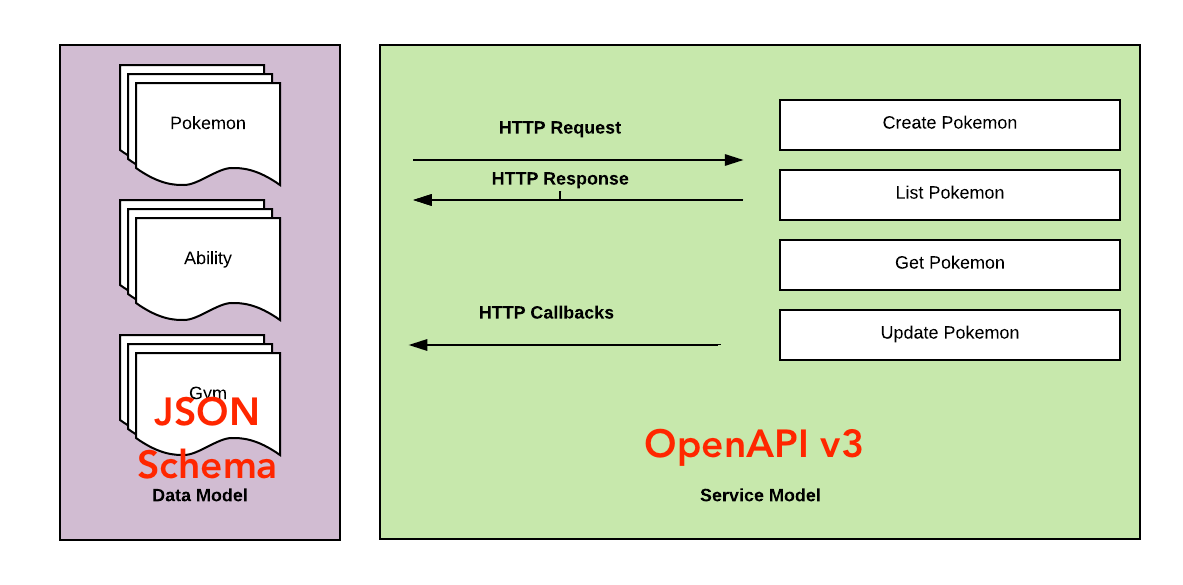

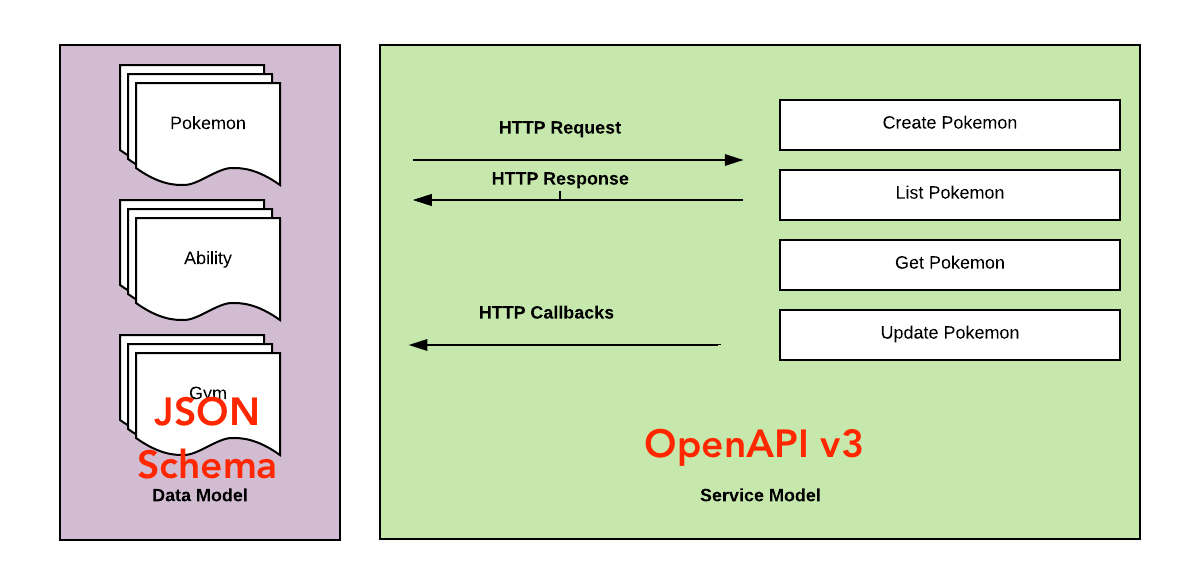

Whenever talking about API descriptions it is impossible to avoid mentioning OpenAPI and JSON Schema. They’re the two main solutions for any sort of API that doesn’t have a type system forcibly jammed into it by default.

Often you’ll need OpenAPI for one thing, and JSON Schema for another. OpenAPI has amazing API documentation tools, fancy SDK generators, and handles loads of API-specific functionality that JSON Schema doesn’t even go near. It also has a focus on keeping this static, for strictly typed languages, where properties should be 1 type and 1 type only.

JSON Schema focuses on very flexible data modeling with the same sort of validation vocabulary as OpenAPI, but for more flexible data sets. Whilst it doesn’t focus just on APIs, by using more advanced vocabularies like JSON Hyper-Schema it can model a fully RESTful API and its hypermedia controls (HATEOAS). JSON Schema can offer server-defined client-side validation, and a bunch of other fantastic stuff that OpenAPI doesn’t really aim to do.

Over the last year I’ve been chasing the perfect workflow, and one of my main requirements when evaluating the common API specs was JSON Schema support. The situation overall was pretty bleak, not just in supporting JSON Schema, but a lot of tooling was just... not great.

Eight months after that article things are better, and the API design-first workflow has been maturing around me, to a point where I’m really happy about most stuff!

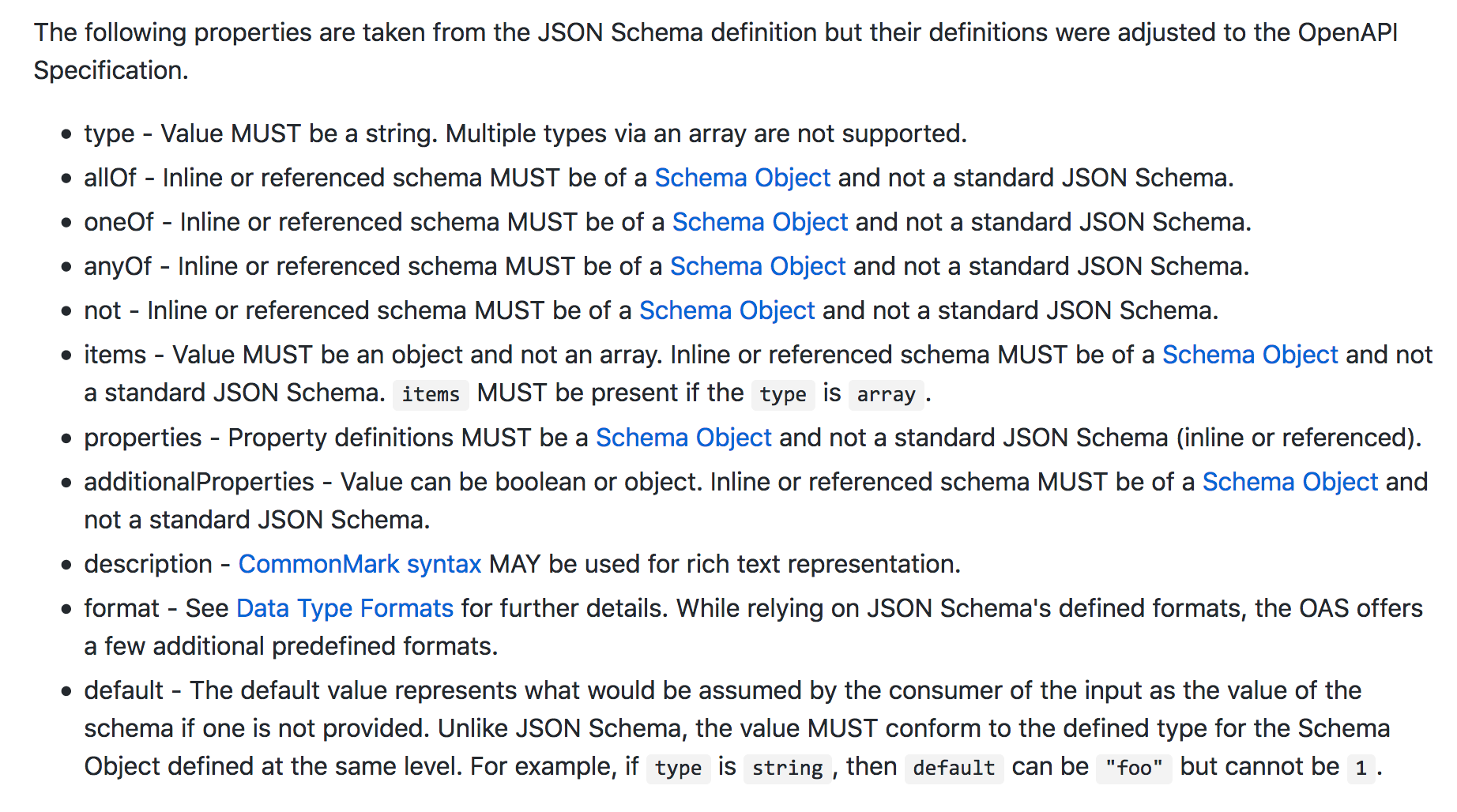

OpenAPI is often described as an extension of JSON Schema, but both specs have changed over time and grown independently. OpenAPI v2 was based on JSON Schema draft 4 with a long list of deviations, and OpenAPI v3 shrank that list, upping its support to draft 5. Despite OpenAPI v3 closing the gap, the issue of JSON Schema divergence has not been resolved fully, and with newer drafts of JSON Schema coming out, the divergence is actually getting worse over time. Currently OpenAPI is still on draft 5, and JSON Schema is on draft 7.

A list of caveats to the JSON Schema support in OpenAPI v3.0.

I’ve been punting this issue for a while in my articles and recommendations at work. The hope was that by the time folks at work had upgraded to v3, there might be a v3.1 out solving the situation, but that has not come to pass. Now I find myself suggesting folks find some way to convert one to the other, or try to write JSON Schema that is compatible with OpenAPI.

Carefully writing JSON Schema for your data model kiiiinda works

The latter can be done, but eventually you’ll get bit by something. At work we’ve been writing JSON Schema files, using them for contract testing and a bunch of other stuff, then rendering them as part of our OpenAPI docs with ReDoc. ReDoc will let you use type: [string, null], but now that we've got Speccy linting our packages, it's reporting that as invalid OpenAPI...

    $ speccy lint docs/openapi.yml
    Specification schema is invalid.

    #/paths/~1foo/post/requestBody/content/application~1json/properties/user_uuid
    expected Array [ 'string', 'null' ] to be a string
    expected Array [ 'string', 'null' ] to have type string
    expected 'object' to be 'string'

If I change that to valid OpenAPI and use type: string with nullable: true instead, validators like Speccy will be happy, but my JSON Schema contract tests will break as they no longer know that null is an acceptable value for that field.
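To make the mismatch concrete, here is a minimal Python sketch of how a JSON Schema validator sees the two spellings. The `type_allows` helper is hypothetical and only understands the `type` keyword for a handful of types. Real validators do far more, but they similarly ignore keywords they don't know about, such as `nullable`.

```python
# Hypothetical helper for illustration: it checks a value against only the
# JSON Schema `type` keyword, and only for the few types mapped below.
JSON_TYPES = {
    "string": str,
    "null": type(None),
    "object": dict,
    "array": list,
}


def type_allows(schema, value):
    """Return True if `value` satisfies the schema's `type` keyword."""
    declared = schema.get("type")
    if declared is None:
        return True  # no `type` keyword means no type constraint
    names = declared if isinstance(declared, list) else [declared]
    return any(isinstance(value, JSON_TYPES[name]) for name in names)


# JSON Schema spelling: null is listed as one of the allowed types.
json_schema_style = {"type": ["string", "null"]}

# OpenAPI v3.0 spelling: `nullable` is not a JSON Schema keyword, so a
# JSON Schema validator never looks at it.
openapi_style = {"type": "string", "nullable": True}

print(type_allows(json_schema_style, None))  # True
print(type_allows(openapi_style, None))      # False
```

The second result is the contract test failure described above: as far as JSON Schema is concerned, the field is a plain string.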

This error was the final straw for me. At work I have been recommending everyone enable Speccy on CircleCI to (amongst other things) make sure we’re writing valid OpenAPI, and I am failing to write valid OpenAPI in an API I manage. I’m also a little tired of explaining this awkward difference to people who would like to use some JSON Schema-based tools.

After grumping at Darrel Miller (a contributor to OpenAPI) and others on the APIs You Won’t Hate slack, some good ideas started to pop up. Darrel is going to try and draft up an extension to OpenAPI that could theoretically end up in a future version — like 3.1 or 4.0:

    x-oas-draft-alternate-schema:
      schemaType: json-schema
      schemaRef: ./real-json-schema.json

This would allow support for various JSON Schema drafts, and any other data model you can think of, including protobuf. The decisions of which data model formats to support would be in the hands of tool vendors. Of course this would increase work for these vendors, and decrease portability for a while as you can only use tools that support your alternate schema, but it would ultimately solve a lot of problems.

There’s some stuff to flesh out, and obvious limitations around which schemas can $ref which other schemas, but there is definitely a solution here. It’ll take some time to get it done, and in that time we all need a solution.

One approach would be trying to use OpenAPI for all the things, write validators and RSpec tools that do this, but we’d have to be 100% OpenAPI for everything, and we’d never get to play with client validation, Hyper-Schema, etc.

Another approach would be converting our OpenAPI models to JSON Schema, but that seems a bit lossy. OpenAPI v3 is based on JSON Schema draft v5, and at time of writing JSON Schema is up to draft v8... This also adds a build step that gets in the way. If you are using JSON Schema for your contract testing, with something like thoughtbot/json_matchers, you would need to edit your OpenAPI model, run the conversion, then run the tests. Or crowbar a conversion into your test suite, meaning the tool handling the conversion needs to be written in that specific language... or pipe a shell command to the CLI... AGH RUN AWAY.

No, I think making JSON Schema (latest possible draft) the one and only source of truth for the data model, then "downgrading" to a flavour of JSON Schema that OpenAPI likes, is going to be the way to go.
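A rough sketch of what that "downgrade" step could look like for the nullable case, in Python. `downgrade_nullable` is a made-up name for illustration, not a real tool, and it only handles the one discrepancy from the Speccy error earlier. It also assumes the schema has already been loaded into plain dicts and lists, and it walks every nested value without checking whether that value is really a schema.

```python
def downgrade_nullable(node):
    """Return a copy of `node` where `type: [X, "null"]` becomes
    `type: X` plus `nullable: true`, recursing into nested values."""
    if isinstance(node, list):
        return [downgrade_nullable(item) for item in node]
    if not isinstance(node, dict):
        return node

    # Convert children first, building a new dict so the input is untouched.
    result = {key: downgrade_nullable(value) for key, value in node.items()}

    types = result.get("type")
    if isinstance(types, list) and "null" in types:
        remaining = [t for t in types if t != "null"]
        if len(remaining) == 1:
            result["type"] = remaining[0]
            result["nullable"] = True
        # Several non-null types, or only "null", have no direct OpenAPI v3.0
        # equivalent, so this sketch leaves those schemas as they are.
    return result


source = {
    "type": "object",
    "properties": {
        "user_uuid": {"type": ["string", "null"]},
    },
}

print(downgrade_nullable(source))
# {'type': 'object', 'properties': {'user_uuid': {'type': 'string', 'nullable': True}}}
```

The JSON Schema file stays the source of truth for contract tests, and only the copy handed to OpenAPI tooling gets rewritten.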

Anyway, gonna keep at this until I come up with some solutions.

Part Two: Problem Solved!